The Challenges with Machine Learning in Threat Detection

The difficulties associated with collecting and curating a real-world cyber dataset for machine learning have thwarted attempts to move threat detection research from concept into practice.

Although our goal is primarily to provide behavioral security detections with an advanced collaboration tool, building the SnapAttack platform required us to solve some of the same problems that cyber artificial intelligence (AI) researchers are struggling with.

In developing the platform and the datasets residing in it, we have progressed along the same path an AI researcher would follow to build an AI tool for threat detection.

Below we enumerate the steps required to train a machine learning model, and we show how building out the SnapAttack platform moves us along that path as a by-product of developing features for customers.

What Does Threat Detection through Machine Learning Look Like?

The typical process for training a supervised machine learning model is to gather a dataset, label it, analyze and curate it, iterate through a train-and-test process, and then deploy a production-quality classification model.

Security professionals attempting to build a real-world threat classification model tend to immediately get bogged down by the first and second steps.

Data for training machine learning (ML) models needs to look just like the data the model will see when it is deployed, and the dataset must be extremely large.

The requirement that the dataset be large and realistic prevents smaller organizations from using their own data (too small) or synthesizing a dataset (too artificial). Collecting the requisite network and host logs from a third party for research or commercial use is nearly impossible because of privacy and security concerns.

Balancing a Cybersecurity Machine Learning Dataset

Should those challenges be overcome, the problem of balancing the dataset rears its ugly head. Balance for an ML dataset means that the dataset has similar numbers of examples of the items one wants to detect and examples of everything else that might be seen in the data.

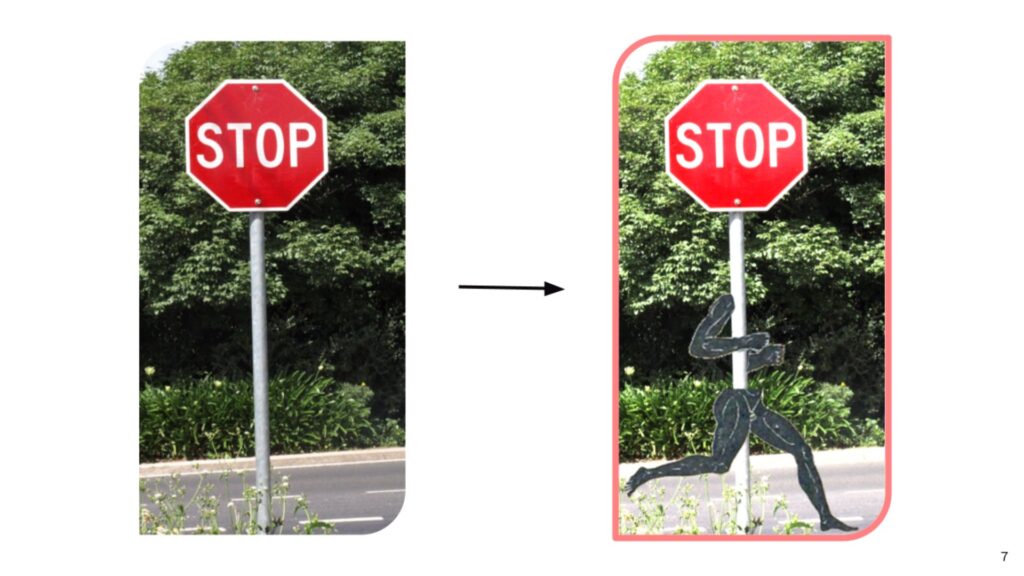

For example, when training a self-driving car, one needs data to train a model to recognize a stop sign. There must be examples of stop signs from all sorts of angles with many different lighting schemes, and then the dataset must have examples of things that are not stop signs so that the model does not mistake a construction sign for a stop sign.

Building a threat detection dataset requires a balanced number of pre-identified threats. But threats are not common on a network, attackers actively evade detection, and it takes a skilled analyst to confirm that a threat has been found. All of these are tricky obstacles to overcome.

To understand how tricky a situation this is, imagine trying to build a dataset of images for building a stop sign detector. Imagine though that we are doing this in a world where stop signs are exceptionally rare.

Stop signs jump behind corners every time you try to take their picture, and it requires years of specialized training to tell the difference between a stop sign and a yield sign. The project of building an autonomous vehicle would be hopeless without some creative solutions. Also, driving would be really tricky.
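To put rough numbers on the imbalance described above, the sketch below counts labels in an invented sample and derives inverse-frequency class weights, one common way to compensate for rare classes (it is the same heuristic scikit-learn applies for `class_weight="balanced"`). The label names and counts are assumptions made up for illustration, not figures from a real network.

```python
from collections import Counter


def class_weights(labels):
    """Return inverse-frequency weights so every class carries equal total weight."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    # weight = n_samples / (n_classes * count): the rarer the class, the larger the weight.
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


# Hypothetical sample: 9,990 benign events and only 10 confirmed threats.
labels = ["benign"] * 9990 + ["threat"] * 10
weights = class_weights(labels)
print(weights)
```

Weighting does not create new information about threats. It only stops the model from ignoring the few examples it has, which is why collecting more confirmed threat examples still matters.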

5 Steps to Build Machine Learning Models for Security Teams

Although extremely challenging, overcoming the difficulties associated with building a dataset and training an ML model would bring a huge payoff. Accurate models for threat recognition could dramatically scale out security team capabilities, and such models are more robust than the signature-based queries currently used for threat hunting and detection engineering.

Threat Detection through Machine Learning Step 1: Collect Data

The SnapAttack platform is designed first and foremost to provide a research workbench to empower security teams. However, the platform’s tools for collecting threat data and processing detections are exactly what is needed to build a dataset for training ML models for threat detection. SnapAttack attack sessions are fully logged and recorded examples of threats, ready to be used as part of an ML training dataset. The collection of threat data, step one towards training an ML model, is already an essential component of the platform.

Threat Detection through Machine Learning Step 2: Label Data

Step 2, labeling the data and validating the labels, is also already an essential feature of the platform: it is necessary for testing detections, for letting users filter based on MITRE ATT&CK tags, and for scoring detections. Incorporating labeling into the workflow and having it result in immediate benefit for the user is essential to breaking through step 2 in our list.

Many researchers attempt to create a labeled dataset exclusively for the purpose of machine learning. Such a task is too labor intensive to justify for the purposes of research. Experts will not spend their time with the data if there is no immediate payoff.

In SnapAttack, labeling attacks and logs is a natural step in executing the attack within the platform, and the payoff is immediate since that information is analyzed to calculate detection quality, evasion potentials, and attack variability. The fact that these labels can later be used for training a machine learning model is almost incidental. With SnapAttack, we get the labeled dataset without the heartache and analyst downtime.
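As a loose illustration of what a labeled record could look like, the sketch below pairs a log event with a malicious/benign flag and MITRE ATT&CK technique IDs, then filters by technique. The `LabeledEvent` class, its field names, and the sample events are hypothetical and are not SnapAttack's actual schema. The technique IDs are real ATT&CK identifiers used only as examples.

```python
from dataclasses import dataclass, field


@dataclass
class LabeledEvent:
    """One log event plus analyst-supplied labels (hypothetical schema)."""
    session_id: str
    log_source: str
    message: str
    is_malicious: bool
    attack_techniques: list = field(default_factory=list)


def filter_by_technique(events, technique_id):
    """Return the malicious events tagged with the given ATT&CK technique ID."""
    return [e for e in events if e.is_malicious and technique_id in e.attack_techniques]


# Invented events for illustration only.
events = [
    LabeledEvent("s1", "sysmon", "powershell.exe launched with encoded command", True, ["T1059"]),
    LabeledEvent("s1", "sysmon", "svchost.exe started", False),
    LabeledEvent("s2", "registry", "Run key modified", True, ["T1547", "T1112"]),
]
matches = filter_by_technique(events, "T1112")
print([e.session_id for e in matches])
```

The same labels that drive the filter can later serve as training targets, which is the point made above about getting the ML dataset almost for free.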

Threat Detection through Machine Learning Step 3: Analyze and Curate

Step 3 is understanding the subtleties of the dataset, extracting actionable insights, and curating the data. Truly understanding what is in the dataset and which labels should be grouped together in the case of a multi-class problem is essential.

There are many different types of threats (in ML terms, a multi-class classification problem). Understanding the mathematical and technical features of the dataset, as well as how those features can help organize the data in surprising ways, is essential both for successfully training an ML model and for presenting actionable insights to the platform users.
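One simple way to decide which labels to group together is to fold classes with too few examples into a catch-all class before training. The sketch below does this; the `min_count` threshold, the `"other"` fallback name, and the sample counts are assumptions chosen for illustration, and grouping by domain knowledge (for example, by ATT&CK tactic) may well be a better choice in practice.

```python
from collections import Counter


def merge_rare_classes(labels, min_count, fallback="other"):
    """Replace labels that occur fewer than min_count times with a fallback class."""
    counts = Counter(labels)
    return [label if counts[label] >= min_count else fallback for label in labels]


# Invented label distribution: two well-covered techniques and two rare ones.
labels = ["T1059"] * 5 + ["T1547"] * 4 + ["T1112"] + ["T1003"]
merged = merge_rare_classes(labels, min_count=3)
print(Counter(merged))
```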

This step of curating and examining the data is often skipped in academic projects, where the researchers obtain a canned dataset such as the LANL, KDD99, or ISCX datasets. The researcher has a set of data and corresponding labels that say “good” or “bad”.

Simply reproducing those answers with an algorithm is usually not the best way to help users, as it ignores the enormous variety of behaviors that could be targeted for detection and the contextual clues that will be helpful for the end user.

Obviously, the curation and analysis process can be tedious, and similar to the labeling problem, it can be hard to justify it solely for the purpose of speculative machine learning research.

SnapAttack aims to make its detection and attack session database as easily accessible as possible. To do this, we have to analyze the datasets, recognize trends and commonalities, deduplicate records, and organize them according to log source and MITRE ATT&CK technique tags. For such a large dataset, curation is essential for making it approachable and usable by the user.

As the dataset grows and the connections between analytics and attack sessions are aggregated and discovered, we obtain a clearer picture of what is in the dataset, what is representative of real-world behavior, and what themes and categories are present. Curating the dataset for the users bootstraps us into being able to curate the data for machine learning and to scope out what AI goals are realistic and achievable with the data on hand.
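The deduplicate-and-organize part of curation can be sketched in a few lines. The example below drops exact duplicate records and groups the rest by log source and ATT&CK technique. The record keys (`log_source`, `technique`, `message`) and the sample data are hypothetical, and real curation would also need fuzzy matching and trend analysis that this sketch leaves out.

```python
from collections import defaultdict


def curate(records):
    """Drop exact duplicates, then group messages by (log source, technique)."""
    seen = set()
    grouped = defaultdict(list)
    for rec in records:
        key = (rec["log_source"], rec["technique"], rec["message"])
        if key in seen:
            continue  # an identical record has already been kept
        seen.add(key)
        grouped[(rec["log_source"], rec["technique"])].append(rec["message"])
    return dict(grouped)


# Invented records; the second entry is an exact duplicate of the first.
records = [
    {"log_source": "sysmon", "technique": "T1059", "message": "powershell.exe spawned by winword.exe"},
    {"log_source": "sysmon", "technique": "T1059", "message": "powershell.exe spawned by winword.exe"},
    {"log_source": "sysmon", "technique": "T1059", "message": "cmd.exe spawned by excel.exe"},
    {"log_source": "registry", "technique": "T1112", "message": "Run key value added"},
]
curated = curate(records)
for group, messages in curated.items():
    print(group, len(messages))
```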

Threat Detection through Machine Learning Step 4: Train and Test Machine Learning Models

At step 4 along the machine learning path, it is time to start testing and training some models to gain some understanding of what will and will not work in a large-scale production model.
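Because threats are rare, two details of the train-and-test loop deserve care: the split should keep some threat examples on both sides, and accuracy alone is misleading, so precision and recall are usually reported instead. The standard-library sketch below shows both ideas. The function names, the 25% test fraction, and the sample data are assumptions for illustration, and a real project would more likely rely on an ML library's equivalents.

```python
import random
from collections import defaultdict


def stratified_split(samples, labels, test_fraction=0.25, seed=0):
    """Split per class so rare classes appear in both the train and test sets."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_label[label].append(sample)
    train, test = [], []
    for label, group in by_label.items():
        rng.shuffle(group)
        # Hold out at least one example of every class for testing.
        n_test = max(1, round(len(group) * test_fraction))
        test += [(s, label) for s in group[:n_test]]
        train += [(s, label) for s in group[n_test:]]
    return train, test


def precision_recall(y_true, y_pred, positive="threat"):
    """Compute precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Invented data: 8 threats among 100 samples.
samples = list(range(100))
labels = ["threat"] * 8 + ["benign"] * 92
train, test = stratified_split(samples, labels)
print(len(train), len(test), sum(label == "threat" for _, label in test))

# One true positive, one false positive, one false negative.
scores = precision_recall(
    ["threat", "threat", "benign", "benign"],
    ["threat", "benign", "threat", "benign"],
)
print(scores)
```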

At SnapAttack we are already positioned to tackle smaller-scale problems with ML and AI. We can:

- Create language models to help recommend query terms for specific ATT&CK techniques and log sources

- Provide optimized analytic suites for particular defensive postures and logging strategies

- Extract patterns from the attack sessions to reveal gaps in our dataset’s coverage

- Use pattern mining and learning algorithms to detect narrowly scoped threats with low-level system data (like registry logs)

Most of the above are tried and true techniques used in recommender systems, language processing, and data mining. They are intended to accelerate user workflow by tackling narrowly scoped problems with AI and data science.
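As one small example of the data-mining techniques mentioned above, the sketch below finds pairs of event types that co-occur in several attack sessions, a stripped-down form of frequent-itemset mining. The event names, the sessions, and the `min_support` threshold are invented for illustration and do not describe how SnapAttack implements pattern mining.

```python
from collections import Counter
from itertools import combinations


def frequent_pairs(sessions, min_support):
    """Return event-type pairs that co-occur in at least min_support sessions."""
    counts = Counter()
    for events in sessions:
        # Sort and deduplicate so each unordered pair counts once per session.
        for pair in combinations(sorted(set(events)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_support}


# Hypothetical attack sessions, each reduced to the event types it produced.
sessions = [
    ["reg_set_run_key", "process_create", "network_connect"],
    ["reg_set_run_key", "process_create"],
    ["process_create", "file_write"],
]
patterns = frequent_pairs(sessions, min_support=2)
print(patterns)
```

A pair that is frequent in attack sessions only becomes a useful detection candidate if it is also rare in benign activity, so a fuller version would compare support across both kinds of data.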

Providing these features for the user gives us the research cycles necessary to understand which features are most useful for machine learning, what behaviors our data is the most sensitive to, and what behaviors we have enough examples of in the data.

Some behaviors will lend themselves to automated detection, but some techniques from the ATT&CK matrix are just too abstract or context dependent to be good targets for automated classification.

Threat Detection through Machine Learning Step 5: Machine Learning Threat Classification

At the end of the path is a production-level ML threat detection model. Our goal for the end of this journey is to be able to construct models that spot malicious activity using low-level system processes and relationships that cannot be changed or spoofed.

The models will have learned from countless variations of attacks and will identify certain behaviors without relying on things like command line strings, which can be obfuscated, or directory-executable relationships, which can be altered.

Once the path has been fully traversed, we will have a pipeline for creating ML models that allow us to scale out robust and accurate detection across enterprise infrastructure.

Conclusion: Threat Detection Through Machine Learning

The steps required to build a machine learning model are nothing new. What is new is a platform that can power through those steps while delivering benefits to users every step of the way. We think this is one of the only ways to achieve the holy grail of ML-based threat detection.

The dataset labeling and gathering strategy must be integrated into a process that provides so much value for an analyst or red teamer that they choose to do it.

SnapAttack is designed to encourage and simplify red and blue team collaboration while strengthening the information security community through shared data and detections. But along the way, we will be deliberately hitting the milestones along this path, culminating in a more robust threat detection method that can benefit everyone.
